Our Creative Digital Agency’s AI Content Guidelines

You can feel a lot of ways about AI (artificial intelligence) in marketing. You can see it as heaven-sent, saving people from tedious tasks. You can view it as job-killing, the sentient straw that breaks the copywriter’s back. You can even view it as middling, producing content that’s repetitive or false (called “artificial hallucinations”) without any warning signs.

What no one is calling it anymore: a trend. AI in marketing is here to stay.

As a leading agency in the digital world whose first value is “Build on Trust,” we wanted to share our internal guidelines for how we’re using artificial intelligence at Online Optimism. We hope that this helps explain to various stakeholders how we’re using this tool to propel our creative work further, for:

- Our Clients to understand how we use AI to support their campaigns.

- Our Employees to use AI to enhance their capabilities (and not replace them with robots).

- Our Artists to see how we are implementing these tools without stealing their creative work (although some will fairly say any use of these tools constitutes theft).

- Other agencies to borrow from our ideas and make them their own to improve our industry as a whole.

We’re excited to share these rules publicly. Importantly, we’re going to continue to update them as AI tools adapt, improve, and likely get terrifyingly good at their jobs over the upcoming years.

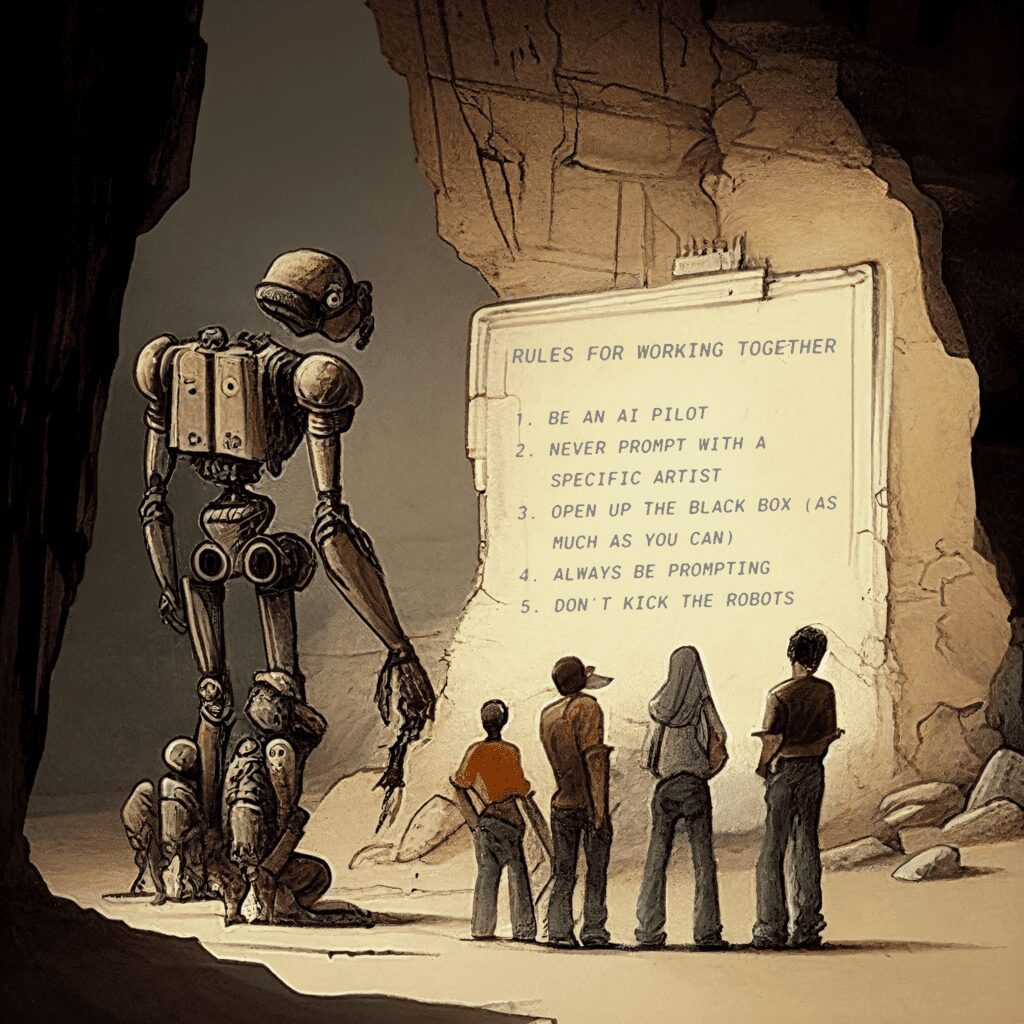

Here’s Our Internal Guide to Using AI at Online Optimism:

1. Be an AI Pilot

The first aircraft autopilot was developed in 1912, and its systems have had over a century of improvements since. Yet every plane you’ve ever flown on still has a human pilot sitting up front who uses autopilot to fly better.

AI needs a pilot to be safe, whether that’s 30,000 feet in the air, or behind a keyboard.

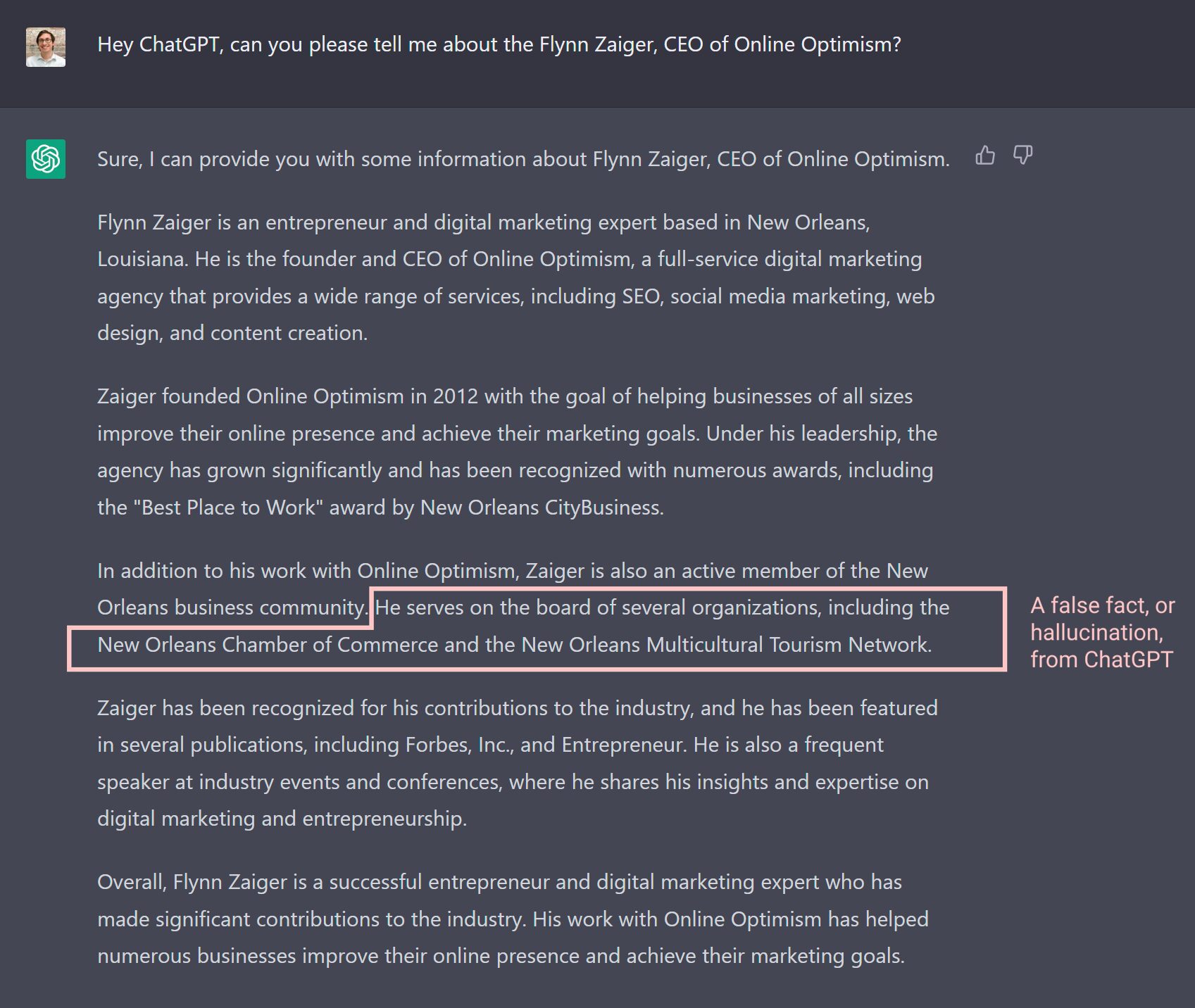

Below is an example of how hard it is to identify hallucinations in writing. When asked about me, ChatGPT produces five paragraphs of humbling content. However, in the middle of paragraph three, it incorrectly states that I’m on the boards of two organizations whose boards I’ve never served on. Falsely adding me to a board isn’t a very scary hallucination, but for an organization that often produces content for medical, financial, and other potentially life-altering clients, it is absolutely essential to fact-check any content that gets touched by AI.

At Online Optimism, we never send an AI prompt and publish the result as is. Instead, one of our Optimists looks over the results, edits them for quality, fact-checks them for trust, and then personalizes them for humanity. Our content pilots, much like airline pilots, keep the content on course along the path the computers set.

2. Never Prompt with a Specific Artist’s Style

One of the more controversial aspects of generative AI is that it is built on training data that represents unpaid labor. This isn’t up for discussion; it’s simply a fact. For language models, where the unpaid labor was essentially “The Internet,” there are fewer worries at the moment (but not zero). Visual generative AI, on the other hand, has quickly shown its ability to emulate individual artists’ styles, endangering their livelihoods. They’re not happy.

Many artists think that any use of generative AI is unfair theft. I understand where they’re coming from, and I think they might have had a case for restricting it…until Stability AI released Stable Diffusion, which was an open-source model. Once the cat was out of the bag, there was no putting it back in. Generative visual art is here to stay.

One way to avoid taking from an artist’s livelihood is to avoid creating work based on them. Therefore, our staff doesn’t utilize any artists’ names in prompts. Instead, we describe things in specific styles that pull from the library as a whole.
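For teams that want to check this rule automatically before a prompt is sent, a minimal sketch might look like the following. Everything here is an assumption for illustration: the blocklist, its placeholder names, and the `find_artist_names` helper are hypothetical and not part of our actual tooling.

```python
import re

# Hypothetical blocklist with placeholder names; a real list would be
# maintained by the team and would be far longer.
BLOCKED_ARTISTS = ["Jane Example", "John Placeholder"]


def find_artist_names(prompt: str, blocked=BLOCKED_ARTISTS) -> list[str]:
    """Return the blocked names that appear in the prompt (case-insensitive)."""
    hits = []
    for name in blocked:
        # Word boundaries avoid matching a name inside a longer word.
        pattern = r"\b" + re.escape(name) + r"\b"
        if re.search(pattern, prompt, flags=re.IGNORECASE):
            hits.append(name)
    return hits


# A prompt that names an artist is flagged; a style description is not.
flagged = find_artist_names("a harbor at dusk in the style of jane example")
clean = find_artist_names("a harbor at dusk, loose watercolor, muted palette")
```

A check like this only catches names someone has already listed, so it supports the human review described above rather than replacing it.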

3. Open Up the Black Box (as Much as You Can)

In artificial intelligence, a “black box” refers to the fact that it’s very hard (and at the moment, essentially impossible) to determine how content results from prompts. You feed words in, magic comes out—but what happens in the middle can be a mystery.

Solving this problem is going to require adjustments to the AI tools that we’re using, but we can do our part to help users understand why certain images look as they do.

All images for Online Optimism or our clients that were mostly created by generative AI will include their prompt as either the caption or the alt text, like the one in this post, to help individuals understand how the content was created.
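As a rough illustration of the alt-text approach, here is a small sketch that builds an image tag carrying its prompt. The `image_tag` helper, the wording of the alt text, and the example prompt are all assumptions made for this example, not a description of our publishing system.

```python
from html import escape


def image_tag(src: str, prompt: str, tool: str) -> str:
    """Build an <img> tag whose alt text discloses the generating prompt."""
    alt = f"AI-generated image ({tool}). Prompt: {prompt}"
    # Escape quotes and angle brackets so the prompt cannot break the attribute.
    return f'<img src="{escape(src, quote=True)}" alt="{escape(alt, quote=True)}">'


# Hypothetical prompt and file name, for illustration only.
tag = image_tag(
    "image-2.png",
    'a pilot at a keyboard, "retro" travel poster style',
    "Midjourney",
)
print(tag)
```

Escaping matters because prompts often contain quotation marks, which would otherwise cut the alt attribute short.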

4. Always Be Prompting

Internally, we’re working to be on the leading edge of promoting AI knowledge and use to make our work more efficient. We’ve implemented OpenAI’s API into our agency’s Slack channel to allow all of our staff to publicly make AI calls. This lets folks learn from each other, identifying what is working for other departments and their own, particularly with regard to copywriting and other written content.

Our designers at Online Optimism use generative AI to enhance their work rather than replace it. As part of their workflows, our designers have usually opted for generating assets on Midjourney or DALL-E 2 rather than full compositions. Whether it’s the perfect background for an ad or a business card at a specific angle for some branding mockups, we remain in the Pilot’s seat and work with AI rather than let AI do the work. It’s no different than using Adobe’s own Sensei AI to fill in the blanks when using content-aware tools or making complex image selections.

The financial cost of testing out new AI tools is minimal, but the risk of our agency falling behind the industry by ignoring them is large. If there’s an inkling that AI can save you time (whether it’s brainstorming, creating, or optimizing your work), you should give it a chance to do so. Just remember to be the pilot it might need, and turn it off when the job is a human’s.

5. Don’t Kick the Robots

AI is not sentient. Yes, it can repeat language that makes it sound sentient, but it’s only parroting humanity. We’re also not big believers in Skynet (even if some of the top folks in AI apparently are) and don’t think the robot-pocalypse is coming.

That being said: you shouldn’t be mean to robots. If we’re going to use them as tools (and potentially, eventually, co-workers that we help pilot), they should be treated with respect. While there is further research to be done on this, early scientific studies have shown “there is some reason for thinking that social robots may causally affect virtue, especially in terms of the moral development of children and responses to nonhuman animals.”

So don’t kick the robots. Be polite to them, and appreciate their work. Except for Clippy. You can be mean to Clippy.

Do you have a question about how Online Optimism is using AI? Reach out to our team here, and we’re happy to build on trust by answering your questions (and possibly updating our guidelines to be more comprehensive).

These guidelines were originally published on March 6th, 2023. They have no updates from their original writing at this time.